Some time ago I posted "Just a thought: free distributed search?", suggesting that relying on the centralized approach of search engine companies like Google might be unwise, and that some kind of decentralized approach could work better for searching. Recently, I was pointed to Majestic-12, an actual attempt to implement this kind of strategy. It's a UK-based project which applies the distributed computing model made famous by SETI@home to the problem. Isn't that amazing?

From the site's published rationale for the project:

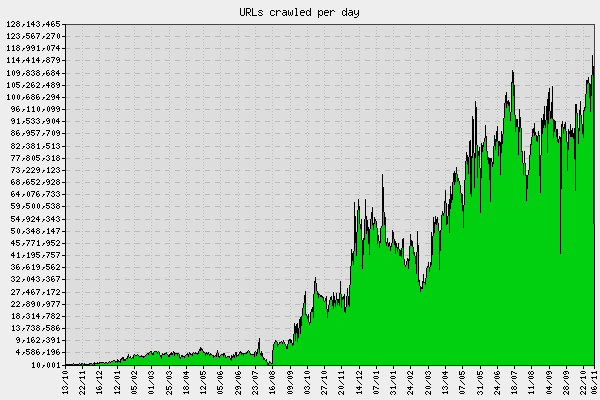

> So what about search engines?
>
> There are millions web sites out there, with billions of pages and so far only a handful of huge companies were able to create a search engine that can provide relevant information to the users. Big companies control the entry point to the data you seek, and neither you nor web masters who run the sites have a say in the matter.
>
> How does Majestic-12 fit into all this?
>
> Majestic-12 is developing a search engine scalable to billions of web pages that is based on support by the community. Since the task of building a World Wide Web search engine is so huge, we have chosen to make Majestic-12 Distributed Search Engine based on the concept of distributed computing. The idea being that many machines work on one task to get it done quicker than one large machine alone. One of the biggest challenges with the search engines is actually getting billions of pages, and to do this cost effectively we have created a client software called MJ12node that can be run on otherwise idle computers. This concept was used successfully by projects like SETI@HOME and distributed.net.
>
> MJ12node software combines machines from all around the globe to crawl, collate and then send back it's findings to the master server. The crawled data will be analysed (indexed) and added to the Majestic-12 search engine. The result? Hopefully the biggest crawl of the web, and perhaps even the most up to date search engine of its time.
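To make the idea in that rationale a bit more concrete, here is a toy sketch of the crawl, collate, send back, then index flow. It is my own illustration, not Majestic-12's code: the function names, the `FAKE_WEB` dictionary standing in for real HTTP fetches, and the simple inverted index are all assumptions, and everything runs in one process rather than across volunteer machines.

```python
from collections import defaultdict

# Hypothetical stand-in for the real web: URL -> page text.
FAKE_WEB = {
    "http://a.example/": "free distributed search",
    "http://b.example/": "distributed computing projects",
    "http://c.example/": "free software",
}

def split_into_work_units(urls, unit_size):
    """Master side: cut the URL list into chunks to hand out to nodes."""
    return [urls[i:i + unit_size] for i in range(0, len(urls), unit_size)]

def crawl_work_unit(urls, fetch):
    """Node side: fetch each page and collate the results into one batch."""
    return {url: fetch(url) for url in urls}

def build_index(crawled_batches):
    """Master side: merge returned batches into a word -> URLs index."""
    index = defaultdict(set)
    for batch in crawled_batches:
        for url, text in batch.items():
            for word in text.lower().split():
                index[word].add(url)
    return index

units = split_into_work_units(sorted(FAKE_WEB), unit_size=2)
# Each unit would go to a different volunteer machine; here we just loop.
batches = [crawl_work_unit(unit, FAKE_WEB.get) for unit in units]
index = build_index(batches)

print(sorted(index["distributed"]))
```

In a real system the work units would travel over the network and the fetches would be actual HTTP requests, but the division of labour is the same: nodes do the slow crawling, the master does the indexing.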

So I guess the answer to my question is "Yes, someone is already working on it". I never cease to be amazed by the creativity and industry of free software developers!